Researchers from the University of Bonn in Germany have developed software that can look into the future. The program first learns a series of actions by watching videos. From its analysis of the steps taken, it then predicts what will happen next. It may sound creepy at first, but its implications at home, in industry or in any other sector are astounding.

Predictive Robots at Home and at Work

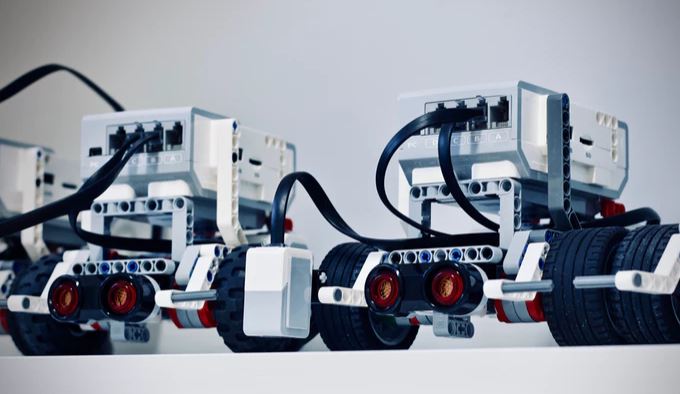

Imagine having robots that know your routine or have a wide library of knowledge on how things are done. A kitchen robot would be like a sous chef who prepares things before you ask for them. The robot could detect what meal you are trying to create and preheat the oven or pass you ingredients without you having to command it.

For maintenance work, the robot can analyze what you are trying to accomplish and can remind you of tools or steps needed to successfully complete the job.

The software might even save you from watching a bad movie. It could watch the first few minutes of a movie and predict upcoming actions such as fight scenes, kissing scenes and more.

For now, though, the software is still in its infancy and has only been used to predict actions when making a salad. Even at this early stage, its best accuracy rate is above 40%.

How the Software Came to Be

The software was developed by a group of computer scientists led by Dr. Jürgen Gall. Dr. Gall explains that they made the self-learning software with the aim of predicting the timing and duration of activities before they happen.

The software went through a training period in which it was given 40 videos, each around 6 minutes long, about making salads. From these videos, the software learned the actions involved in making a salad, the typical sequence of those actions and the average duration of each one.
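To make those three things concrete (actions, typical sequence, average duration), here is a toy sketch in Python. It is not the Bonn team's method, which the article does not describe in detail. It simply counts statistics from hand-labelled videos, and the action names, durations and the function `learn_statistics` are all made up for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical annotations: each video is a list of (action, duration in seconds).
videos = [
    [("cut tomato", 30), ("cut cucumber", 40), ("mix dressing", 20)],
    [("cut tomato", 50), ("mix dressing", 30), ("cut cucumber", 20)],
    [("cut tomato", 40), ("cut cucumber", 30), ("mix dressing", 40)],
]


def learn_statistics(videos):
    """Return the average duration per action and the most common next action."""
    durations = defaultdict(list)
    transitions = defaultdict(Counter)
    for video in videos:
        for action, seconds in video:
            durations[action].append(seconds)
        # Pair each action with the one that follows it in the same video.
        for (current, _), (following, _) in zip(video, video[1:]):
            transitions[current][following] += 1
    mean_duration = {a: sum(d) / len(d) for a, d in durations.items()}
    likely_next = {a: c.most_common(1)[0][0] for a, c in transitions.items()}
    return mean_duration, likely_next


mean_duration, likely_next = learn_statistics(videos)
print(mean_duration["cut tomato"])  # average seconds spent cutting tomatoes
print(likely_next["cut tomato"])    # action that most often comes next
```

Even this crude counting shows why more videos help: with only three examples, a single unusual chef can flip which action looks "typical".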

The University of Bonn publication explains that this is no small feat as every chef has his own approach and the sequence of actions depends on the recipe.

After the training period, Gall’s team tested how successful the software’s learning process was. They showed the software videos it had not seen before, told it which actions took place in the first 20-30% of each video, then asked it to predict what would happen next.

The team set the test’s parameters to count a prognosis as correct only if the computer predicted both the activity and its timing accurately. (A tough test even for humans who know how to make a salad, I dare say.) The software performed well nonetheless, reaching an accuracy rate of over 40% for short forecast periods. For activities more than three minutes in the future, the software’s accuracy dropped, but it is worth noting that the computer still gave correct answers in 15% of the cases.
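Here is one way to picture a score that rewards getting both the activity and the timing right. This is only a sketch: scoring second by second is my assumption, not something the article confirms, and the function names and sample data are hypothetical.

```python
def expand(segments):
    """Turn [(action, seconds), ...] into one label per second."""
    labels = []
    for action, seconds in segments:
        labels.extend([action] * seconds)
    return labels


def timeline_accuracy(predicted, actual):
    """Fraction of seconds where the predicted action matches the real one."""
    true = expand(actual)
    if not true:
        return 0.0
    # Pad or trim the prediction so it covers the same time span as the truth.
    pred = (expand(predicted) + [None] * len(true))[: len(true)]
    correct = sum(p == t for p, t in zip(pred, true))
    return correct / len(true)


# Hypothetical example: the right actions in the right order,
# but the switch is predicted 10 seconds too early.
actual = [("cut cucumber", 40), ("mix dressing", 20)]
predicted = [("cut cucumber", 30), ("mix dressing", 30)]
score = timeline_accuracy(predicted, actual)
print(round(score, 2))
```

With a score like this, naming the right action at the wrong time still costs points, which is part of why longer forecasts are so much harder.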

Gall and his colleagues want the public to understand that this is only the first step toward action prediction. What we can hope the researchers develop next is software that predicts events with high accuracy without being “told” what happened in the first part of a video, and that makes accurate predictions over longer forecast periods.

For now, I just wish that this software will soon have a name to make it easier to talk about (read: geek about) with friends.